I hope to show that . . . iatrogenesis has become medically irreversible: a feature built right into the medical endeavor. The unwanted physiological, social, and psychological by-products of diagnostic and therapeutic progress have become resistant to medical remedies. New devices, approaches, and organizational arrangements, which are conceived as remedies for clinical and social iatrogenesis, themselves tend to become pathogens contributing to the new epidemic. Technical and managerial measures taken on any level to avoid damaging the patient by his treatment tend to engender a self-reinforcing iatrogenic loop analogous to the escalating destruction generated by polluting procedures used as antipollution devices.

-Ivan Illich, 1976

It’s a long one, y’all. Buckle in. Or hit the “back” arrow. Your time, your choice.

Satanic Panic

In 1983, an alcoholic mother who suffered from paranoid schizophrenia walked into the Manhattan Beach, California Police station and reported that her two-year-old son had been sodomized by her soon-to-be ex-husband and one of her son’s preschool minders. This was based on her son having a painful bowel movement. The claim was elaborated upon with wildly incoherent tales of Satanic rituals, including people flying through the air.

Three years earlier, a quack psychiatrist named Lawrence Pazder and his patient (and later, wife), Michelle Smith, had published a salacious bestseller called Michelle Remembers, in which they claimed that, through “therapy,” they’d “recovered” Michelle’s “repressed memories” of childhood ritual sexual abuse at the hands of Satanists to whom she’d been handed over by her mother. It was horse shit from A to Z, but it ignited a mass delirium over supposedly widespread Satanic horrors going on right under our noses; just the kind of thing that a schizophrenic mom with an alcohol addiction might latch onto with both hands and her teeth.

The mentally ill mother was Judy Johnson (she died three years later from complications of her alcoholism), and—incredibly—the Manhattan Beach Police and Los Angeles County District Attorney’s Office would come to take this allegation seriously and initiate an investigation, followed by arrests and trials.

The first accused, Ray Buckey, as well as his family and three teachers (Virginia McMartin, Peggy McMartin Buckey, Peggy Ann Buckey, Mary Ann Jackson, Betty Raidor, and Babette Spitler), would eventually be jailed and subjected to the most expensive trial in US history (48 accusers and 321 counts of child abuse), after the cultural contagion spread to other families with children in the McMartin Preschool. This mass lunacy was validated by another quack therapist—Kee MacFarlane. MacFarlane, following in Pazder’s footsteps, “worked” with the children to elicit even more (and ever wilder) allegations of Satanic sexual abuse against the McMartins, again using highly suggestive forms of . . . basically, brainwashing . . . with the kids to manufacture “recovered memories” of the alleged abuse. The chief propagandist for this travesty was MacFarlane’s lover, the “journalist,” Wayne Satz. The maniacally zealous, unethical, and still unrepentant prosecutor was Lael Rubin, who was found to have withheld exculpatory evidence, and should have been charged afterward with prosecutorial misconduct. The McMartins were eventually turned loose by a hung jury in 1990, but not before they’d had their lives destroyed. There’s a very good 1995 film about this whole horrific sham, called Indictment, that’s well worth your time.

The Satanic panic, by the way, has waxed and waned, but never really gone away. Remember Pizzagate?

In the course of the late 1980s and through the 1990s, even persisting to a smaller degree today, the panic spread like the Maui wildfire. By 1994, in the US alone (this mass delusion had also gone international) there were more than 12,000 claims of Satanic ritual abuse (none ever corroborated). Nonetheless, thousands of parents, other relatives, carers, and neighbors were subjected to false accusations of ritual child abuse, a charge that even after exoneration had (and has) a tendency to stick. These lives were ruined by utterly false allegations, coached out of accusers by unscrupulous and-or deranged clinicians—from MSWs with a couple of courses on counseling, to clinical psychologists, and even to a few unethical/incompetent psychiatrists. All these so-called clinicians were dealing in a counterfeit currency called “repressed/recovered memory.”

Repression

Before we tackle this subject, we’ll first need to review the idea of psychological repression as it was first popularized by Sigmund Freud. Freud and his mentor, Josef Breuer, had, in working with female “hysterics” (now a debunked diagnosis), hypothesized that what might have been organic difficulties (like schizophrenia) or neurotic adaptations to the life circumstances of certain late nineteenth century women, were actually the result of “trauma,” the memory of which had been “repressed” (or blocked) as a defense mechanism. If this all sounds both familiar and plausible, it’s because we’re still culturally indoctrinated into versions of this bunk.

It’s what I call the steam boiler model of psychology. Steam boilers were powering the new world back then, so it was as easy an analogy to make as the way we do now by comparing human beings to computers.

Trauma, in this model, was some physical or psychological shock in the past that then became blocked, or “repressed.” Early on, Freud assumed the trauma of all female “hysterics” was caused by childhood “seduction,” or what we now call sexual abuse. He later let that particularity go, but what he maintained was the notion that “neurosis”—a notion as ineffable as “trauma”—was caused by some form or another (he had three) of “repression.” (Freud himself eventually abandoned this notion, though many who followed held onto it like a dog with a bone.)

Neurosis covers a host of anxiety-based and maladaptive attitudes and practices. The person—or steam boiler—by holding these blocked memories of “trauma” in the unconscious—was in danger of a breakdown from a kind of pressure build-up that was interfering with the proper function of the machine (possibly even an explosion). The trick, then, for a steam boiler, is to install a pressure valve (like you have on your pressure cooker); and the trick for neurotic or hysterical patients was for the analyst/therapist to “talk” the patient into recollection of the trauma, whereupon the patient could experience a catharsis . . . a letting off of the pressure. One famous patient even called it the “talking cure.”

I have to take a detour here—sorry—and some readers will recognize this from The Battle of Woke Hill.

In the 1930s, Wilhelm Reich would marry this “Freudian” steam-boiler-repression idea to politics. Reich, the author of The Sexual Revolution, popularized the bizarre notion that fascism was an outcome of sexual repression—as if Mussolini wasn’t a world class womanizer who ejaculated more often than he slept, or as if all the Italians who followed him were time bombs of “sexual repression.”

In Reich, the transposition of Marxist conflict theory—the bourgeoisie versus the proletariat—onto a polarity of sexual repression versus sexual liberation, set up sex as the battering ram that would begin the assault not just on “repressive traditions” and old guard economic socialism (which had been very sexually conservative), but against “religion,” understood through the lens of the prevailing post-war ideological postulate of a polarity called religious versus secular (question-begging on a grand historical scale).

Reich’s thought is based on the premise . . . that there is no order of ends, no meta-empirical authority of values. Any trace not just of Christianity but of “idealism” in the broadest sense . . . is eliminated. What is man reduced to, then, if not to a bundle of physical needs? When these needs are satisfied — when, in short, every repression is removed — he will be happy . . . Having taken away every order of ends and eliminated every authority of values, all that is left is vital energy, which can be identified with sexuality . . . Hence, the core element of life will be sexual happiness. And since full sexual satisfaction is possible, happiness is within reach. (Augusto Del Noce)

“Religion,” wrote Reich, “should not be fought, but any interference with the right to carry the findings of natural science to the masses and with the attempts to secure their sexual happiness should not be tolerated.” (emphasis added . . . perhaps Reich’s latent authoritarianism was caused by his lack of sexual liberation)

The human being is reduced to a psychosoma—a mind-body, a “bundle of needs,” the satisfaction of which can produce the only happiness left to someone reduced to a body. What we’re seeking then is to maximize or optimize this bare psychosomatic life through the production of vitality. In the scientistic absence of and opposition to transcendence, there can no longer be any moral outlook that reaches beyond vitality. This ideology, vitalism, then, absorbs morality. The scientistically-reduced psychosoma as sexual steam-boiler. If too much pressure (created by the blockages of “repression”) builds up, the machine will explode (into fascism, one presumes). I’ve been monitoring my friends among the celibate Dominican Sisters for years now for this dangerous pressure build-up, but alas no explosions have been forthcoming. Pictured below: ticking Dominican time bombs.

Sorry, just had to. Okay, back to Freud, trauma, repression, and memory.

The basic template left by Freud and picked up by subsequent generations was that “trauma” (however defined) leads to “repression” (however defined) of the “memory” (however defined) of the trauma . . . which has to be then coaxed back through the patient’s pressure valve by a clinically trained boiler technician.

I’ll be the first to admit my biases about therapy, which have been formed by my own brief, but none too stellar, experiences with it. And I’ll try not to dismiss therapies altogether, because people I know and trust have said they benefited from certain forms of therapy, and I have to believe them. So it is in no way an indictment of therapy writ large when I say, based on my own first hand and second hand knowledge, as well as a mountain of evidence, that, in spite of the good therapists, there is also a profusion of charlatans, quacks, and incompetents. We saw some of that with the whole Satanic panic, which enlisted a brigade of malignant nincompoops coaching countless children and adults into “remembering” things that never happened. They left a damage path of lingering false accusations and broken families in their wake.

Now, I don’t for one moment doubt that certain memories are so painful or frightening that people find ways to avoid them. In fact, not only do I not see this avoidance as pathological, it’s just plain adaptive. I strongly recommend it. Yes, avoid dwelling on the worst shit that happened to you. Why in the hell would anyone want to dwell on something that distresses them? (No, you won’t blow up.)

There’s no doubt that people forget things. In fact, we forget most things. We’ll cover that in greater detail below with a section on remembering. Likewise, people do have bad experiences, the memories of which they avoid or “let go” by simply not thinking about them for a long time. But people do not forget (or have locked away) their most intense experiences, and they don’t forget intense experiences that are sustained and repeated, unless there is some organic problem, like a severe head injury. We don’t forget about the deaths of loved ones. We don’t forget beatings, or rapes, or intense combat, or a home burning down, or a divorce, or an abortion, or when we euthanized a pet. We just don’t.

And, in fact, many of us, in dealing with what are now called traumatic events, begin processing said events immediately, almost obsessively, reliving them in our minds again and again and again. Cultures have long recognized the necessity of this “trauma” recycling, painful as it is, as a way of grinding the sharpest edges off of the experience so we can get on with our lives—like sitting Shiva. We don’t “bury” the experiences, we handle them, dive down into them, replay them, until they’re dulled enough to get on with things. We especially don’t repress them into a form of amnesia.

In fact, now is a good time to take a moment to detour through this notion of trauma.

Trauma

I was, once upon a time, trained in what is called Advanced Trauma Life Support (ATLS). This is training for ER docs and nurses, but in my case it was part of our training as Special Forces medics; and trauma in that context meant bodily injuries. What happens when one is shot or wounded in explosions, crushed in a car wreck, poisoned, mauled by a dog, hurt in a long fall or an accident with a nail gun, whatever.

Also while I was in the Army, what was once called shell shock—later called combat stress reaction—came to be called post-traumatic stress disorder (PTSD). In this case, the word trauma only anecdotally has anything to do with physical wounds, inasmuch as one might be found to suffer from PTSD without having sustained any physical injuries at all. “Shell shock” is actually more immediate, and one can still observe a series of almost autonomic reactions in people who are facing extreme danger. From fight-or-flight, they transition into a “frozen” state beyond panic. This is physiological, with some ancient history as adaptive, I’m sure. But when we talk of trauma in the context of PTSD, we are naming something that happens long after the danger has passed, and the physiology has settled.

No one is saying that these post-event experiences are all unreal or a product of suggestion, though some are (as we’ll show). But they are more on the order of conditioned reflexes than they are of memory; and they do dissipate with time.

What we are talking about, however, is how trauma had been metaphorically stretched into the broad domain of psychology—as a mental state, a disturbance of the mind (a phenomenon that scientism—to which we’ll return—disingenuously denies). Trauma was further stretched from response to something bad happening to a person to witnessing something bad. The trauma “stretch factor.”

On “scientism”—clumsy segue here into a short excursus on something we’ll tackle later—it’s not science (the practice), but an ideology, which means here that I don’t reject scientific explanation as long as it stays in its lane. In fact, I quite like science, the practice, and I think it has great value. In the first part of my book, Borderline, on gender and warfare, I included a bit about Robert Sapolsky and Lisa Share’s scientific research on the physiological symptoms of psychological stress associated with physical abuse, while studying a troop of baboons. I used their study to make a case against pure biological determinism—another path for another time—but what they found, which is not surprising in the least, is that baboons (and by extension other primates, like us) have physiological reactions to being bullied and terrorized (as the troop was by a few dominant males). They also suggested, again obviously, that there are physiological reactions to psycho-trauma, or stress, or being distressed, or whatever you call it. Duh.

On the other hand, we now have young college students, whose lives have been, for the most part, easy and affluent—nothing even in the range of what life is like for Palestinians in Gaza, displaced Syrians, poor Haitians, or folks living on the Pine Ridge Reservation. These affluent American students are claiming to be traumatized (even “harmed”—another now-stretchy term) by everyday language that they simply dislike or feel they have to challenge as a demonstration of in-group virtue.

Somewhere between the purely physiological interpretation of psychological “trauma” and the bourgeois snowflake version of so-called “harm” are the countless forms of psychological distress, now encompassed by the thinly spread term, trauma. Psycho-trauma began its life as that “metaphorical extension” from physical trauma in the nineteenth century among the psychiatrists of the time. I’m going out on a limb, as you’ll soon see, by saying the invention of this “trauma” was not a discovery of something already there, but a faulty metaphor that was then reified, and which spread—like a highly transmissible lab-leaked virus—into the general population through repetition and suggestion, whereupon psycho-trauma became a cultural consensus.

(And yes, distress will sometimes correspond to memory errors and memory “loss,” but it does not follow that “trauma” leads to “dissociation”—another term we’re about to attack.)

The Freudian/post-Freudian metaphor of trauma has come to be assumed as simultaneously an inevitable experience in life and the covert causative agent for a menu of “disorders,” which may seem contradictory to many of us rubes, but it’s certainly a ready-made formula for fat bags of billable clinician hours.

If I may personally digress, as to PTSD, most of what are called disorders (a sly term indeed) in response to things like combat stress and rape are actually quite normal and even rational responses to violent life-threatening and life-changing events.

PTSD

Now me, I have nightmares sometimes.

You know what? Everyone has nightmares sometimes. But, given that I was a career combat soldier, beginning as a parachute infantryman in Vietnam and progressing through seven other conflicts, people like to tell me that my nightmares are a result of PTSD. It’s a diagnosis in search of confirmative symptoms. In fact, most of my nightmares, when I do have them, are not about combat at all, and many of them are nonsensical (upon waking) but accompanied by feelings of dread during the dream. For all I know, a nightmare might be “triggered” (another term I’ll take to the woodshed) by eating too much salt before bed, by binge watching some violent television show, or from obsessing about a Facebook post . . . or nothing at all . . . it might be just how one’s more narrative, waking consciousness randomly unwinds and swirls together as we drift into a REM state.

I’ve never had hallucinatory flashbacks of actual events playing back like a video recording and neither has anyone else—it’s a film trope, people. I do have a tendency to get low (duck, prepare to hit the prone) if I hear a sudden, extremely loud noise nearby (but that’s a reflex, a trained one in fact, not a damn flashback).

PTSD does not meet even the baseline criteria of disease diagnosis. On the Mayo Clinic’s site, the listed “symptoms” of PTSD are entirely derived from subjective reporting, and they consist of many reported experiences/feelings that are not actually pathological. “Distressing memories of the event”?

Are there any memories of scary and-or painful events that are welcome and pleasurable? Distress when something reminds you of the event? Do these kinds of “symptoms” (again, they are “symptoms” in order to make them conform to a presumed diagnosis) qualify as pathological, or are they normal responses to unpleasant memories? “Avoidance of thinking or talking about the traumatic event,” and avoidance of “places, activities or people that remind you of the traumatic event”? Hell yes, we avoid them!

I know that in my own most non-pathological moments, I seek out reminders of the worst things I’ve ever experienced. Right.

“Negative thoughts about yourself, other people or the world; Hopelessness about the future; Memory problems, including not remembering important aspects of the traumatic event; Difficulty maintaining close relationships; Feeling detached from family and friends; Lack of interest in activities you once enjoyed; Difficulty experiencing positive emotions; Feeling emotionally numb.”

I know plenty of people who’ve never been in combat, never been attacked or raped, never been in some horrific accident, who experience all of these (apart from memory of some specific event): academics, poor people, drug addicts, and so on . . . in fact, most of these so-called symptoms are part and parcel of living in an increasingly precarious, commodified, and alienated society!

The “diagnosis” of PTSD is not scientifically sound at all, but a form of cultural consensus. The authoritative text on so-called mental disorders is the Diagnostic and Statistical Manual (DSM, there are historical revisions ranging from 1 to 5 to 5-TR), which is not, and does not claim to be, a scientific document, but a consensus document, which is revised and republished by combative committees.

There was once a consensus that smoking cigarettes aided digestion.

Medical history includes a history of remarkably wrong, and often dangerous, ideas and practices; and there’s no reason today to assume that the generation of wrong ideas by “educated people” has come to an end. We trust medicine, in part, because it has a great PR department. Not to say that there aren’t efficacious and beneficial medical practices, but to point out that there is also a question of what Ivan Illich called iatrogenesis—harm caused by the “treatments.” Most of what I’ll discuss in this piece is about iatrogenesis.

Not only is PTSD a diagnosis seen through a glass darkly, it’s culturally contagious. There are actual incentives for claiming (and even coming to believe) that one “has” PTSD, in instances where the culture rewards “tragi-heroic victims” with esteem, attention, and sympathy.

In 1983, the Veterans Administration published the National Vietnam Veterans Readjustment Study (NVVRS). One aspect of that study was “PTSD.” In 2006, Dohrenwend et al. revisited the study, contacted many of the original participants, and studied the participants’ actual military personnel files (Dohrenwend was one of the consultants on the original NVVRS project). In the original study, 30.9 percent of respondents were diagnosed with having had (past tense) “full-blown” PTSD, 53.4 percent with either full-blown or “partial” PTSD, and 15.2 percent currently suffering (now a decade and a half to two decades after the war) from full-blown PTSD.

For starters, refer back to the diagnostic symptoms above, pursuant to Mayo, then think of what other causes (or co-morbidities) might have accounted for those symptoms, which were ignored by the NVVRS. That’s an aside from the point I’m aiming at, but an important one. (see Dr. Richard McNally, director of clinical training at Harvard University's department of psychology, one of whose presentations I used for this summary.)

Here’s the real issue (okay it’s statistics, sorry). 2.8 million US troops served in Southeast Asia during the US occupation of Vietnam. Only 12.5 percent served in combat roles. In other words, around seven out of eight troops who went to Vietnam were never in combat of any kind. They had access to nice facilities, showered every day, put on clean uniforms, put up with all the non-hazardous, everyday bureaucratically-distorted bullshit in the military, and rested comfortably each night. So, how in the hell did more than half of returnees have or have had PTSD two decades later in 1983? Here’s my answer. Robert De Niro and Sylvester Stallone!
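For anyone who wants to check my arithmetic, here is a rough back-of-the-envelope sketch in Python. It only uses the figures quoted above (2.8 million troops, 12.5 percent in combat roles, 53.4 percent full-blown or partial PTSD). Applying a survey percentage to the whole headcount is my own simplifying assumption for illustration, not something the NVVRS did, and the variable names are made up for the sketch.

```python
# Figures quoted in the text; nothing here is new data.
TROOPS_IN_THEATER = 2_800_000   # US troops who served in Southeast Asia
COMBAT_SHARE = 0.125            # share who served in combat roles
ANY_PTSD_SHARE = 0.534          # NVVRS: full-blown or "partial" PTSD

# How many troops were in combat roles, and how many were not.
combat_troops = round(TROOPS_IN_THEATER * COMBAT_SHARE)
non_combat_troops = TROOPS_IN_THEATER - combat_troops

# ASSUMPTION (illustrative only): apply the survey percentage
# to the full headcount to get an implied number of cases.
implied_ptsd_cases = round(TROOPS_IN_THEATER * ANY_PTSD_SHARE)

# How many implied cases there would be per combat soldier.
cases_per_combat_soldier = implied_ptsd_cases / combat_troops

print(f"Combat roles:       {combat_troops:,}")
print(f"Non-combat roles:   {non_combat_troops:,}")
print(f"Implied PTSD cases: {implied_ptsd_cases:,}")
print(f"Implied cases per combat soldier: {cases_per_combat_soldier:.2f}")
```

On these assumptions, the implied number of cases is more than four times the number of people who were ever in a combat role, which is the mismatch I’m pointing at.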

The tragic and psychologically wounded Vietnam antihero-veteran was the fashionably edgy new Hollywood archetype—what we had before clickbait. Taxi Driver, The Deer Hunter, and First Blood came out in 1976, 1978, and 1982 respectively. All three films were horse shit from beginning to end, but suddenly being a Vietnam Vet™ was transferring cultural capital into your account. The public, including many veterans, lapped this stuff up like poodles at a milk trough. (First Blood was the most egregious, with its phony “spat upon veteran” myth, and its fascistic “stabbed in the back” sub-narrative.)

[Want to know what contributed to the Satanic panic? Rosemary’s Baby (1968), The Exorcist (1973, falsely claimed by the book author to be roughly based on a true story), and The Omen (1976).]

When Dohrenwend et al. reviewed and revisited the NVVRS, they started with three valid assumptions. (1) Being exposed to “trauma” (the elastic category they accepted more readily than I do) does not correspond 1-to-1 with having PTSD, because most people exposed to trauma—as ascertained through a review of the studies—do not develop PTSD (they may now, because some clinician tells them so). (2) There is a “dose-response effect,” that is, a guy who spent a solid week in intense combat on Hamburger Hill will have been far more likely to experience post-event difficulties, attributable to the event itself, than a guy who was in a two-hour firefight, and the guy in the two-hour firefight is more likely to show more “symptoms” than the medical corpsman back in the safe firebase who offloads the casualties from helicopters. (3) Not all emotional changes occurring in a war zone are pathological. Hyper-vigilance, for example, is sometimes not only appropriate, but necessary.

Dohrenwend et al. also accepted PTSD as a diagnosis, unlike me, but they set three criteria (two for ruling out) for acceptance of the diagnosis. (1) They threw out cases where there was no demonstrable exposure to real traumatic events. (2) They reviewed military records for corroboration, without which they rejected the diagnosis. (Every big thing one does in the military is recorded in periodic evaluations, unless the operations are highly classified; this does not apply to the vast majority of operations.) They dropped 59 percent of the cases on this criterion alone (guys were fabricating often wild stories, as vets still often do). (3) They substituted something called the Global Assessment of Functioning (GAF) rating (of impairment) for the DSM-IV criterion of “distress.” Impairment is something closer to any diagnosis of pathology than mere distress. (I was distressed when my dog woke me from a nap.)

These steps were taken because, as military psychiatric historian Ben Shephard had noted in an article published by the Journal of Anxiety Disorders, the NVVRS published “numbers which are in any other context patently absurd.”

The study of the study concluded that the NVVRS had overestimated the incidence of PTSD by at least 40 percent.

In my opinion (that and two bucks will get you a cup of bad coffee), Dohrenwend et al. were themselves too cautious in their cutting. They gave more weight to the lower end of the GAF impairment scale than it deserved. The scale then went 1-to-9, with 9 being unimpaired and 1 being utterly dysfunctional (it has since been modified from nine to ten standards). They declared a subject sufficiently impaired to assign the diagnosis of PTSD all the way from 1 through 7. Back then, a 7 meant “some difficulty in social, occupational, or school functioning, but functioning pretty well, has some meaningful interpersonal relationships . . . some mild symptoms.” Uh . . . does this describe you as well as it describes me? Do we need drugs and therapy? If they had cut off at 6, instead of 7, for example, the numbers would be thus: Current PTSD (late 1980s): NVVRS = 15.2%; Dohrenwend 1-7 = 9.1%; Dohrenwend 1-6 = 5.4%. Note that even with this, we are still not accounting for all the possible causes/co-morbidity that might have crept into these people’s lives over the twenty plus years since they’d been to Vietnam (mostly for only 11-12 months, and during those months—even for combat troops—active combat was only episodic).
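If you want to see how the “at least 40 percent” overestimate falls out of those three numbers, here is a minimal sketch in Python. The three percentages are the ones quoted above for current PTSD; the function name and the idea of expressing the gap as a relative reduction are my own framing, not the study’s wording.

```python
def relative_reduction(original: float, revised: float) -> float:
    """Percentage by which `revised` is lower than `original`."""
    return (original - revised) / original * 100


# Current-PTSD rates quoted in the text, in percent.
NVVRS_ORIGINAL = 15.2   # original NVVRS estimate
GAF_CUTOFF_7 = 9.1      # re-analysis, impairment counted from 1 through 7
GAF_CUTOFF_6 = 5.4      # re-analysis, impairment counted from 1 through 6

cut_at_7 = relative_reduction(NVVRS_ORIGINAL, GAF_CUTOFF_7)
cut_at_6 = relative_reduction(NVVRS_ORIGINAL, GAF_CUTOFF_6)

print(f"Cutoff at 7: original estimate reduced by {cut_at_7:.1f}%")
print(f"Cutoff at 6: original estimate reduced by {cut_at_6:.1f}%")
```

The first figure comes out at roughly 40 percent, which matches the study-of-the-study conclusion. Moving the cutoff down one notch would take the reduction to roughly 64 percent.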

And yet, nowadays, I can go right down to my doc, tell her I’m retired Army and I have bad dreams sometimes, and I can secure some psychoactive drugs and a string of appointments with overpriced counselors.

Diagnostic creep is a real and iatrogenic thing.

Why, then, this excursus on PTSD, and why am I throwing so much shade on “trauma”? Well, it’s because (1) I don’t have absolute trust in what is now called the “health care industry,” (2) I’m a pretty street-wise and world-weary septuagenarian who’s seen more hustles than Carter had little liver pills, (3) I, as well as many people I know, have “been exposed” to what is now called (metaphorical stretch factor) “trauma,” and most of it is just part of life to which we adapt between sperm and worm, and which need not and ought not be medicalized, and (4) I’ve found sufficient and reliable evidence during my research to substantiate my experiential intuition that stretch-factor “trauma” is culturally constructed (here, I am definitely a constructivist) through manipulation of our vulnerability to suggestion as well as diagnostic creep and cultural contagion (yes, I know, those are metaphors, too).

In the interstices of diagnostic creep and cultural contagion, assumptions grow like the mold in your shower grout.

The clinicians who were complicit in the “Satanic panic” assumed that metaphorical trauma resulted in a kind of magical amnesia—and went in search of the lost memories they’d already assumed existed.

Tentative intermediate conclusion: Any clinician who uses suggestive techniques with his or her patients/clients rates high on my index of suspicion. They’re not there to suggest, and suggestion (ahem) suggests an agenda (on the part of the clinician). Agendas are supported and advanced far too often by manipulation.

One of the most dangerous suggestive tools agenda-driven clinicians use is hypnosis.

Onward.

Trance & Suggestion

Trance is defined in medical terms as a state of semi-consciousness within which a person decouples from normal, waking sensory processing. By this definition, we might designate as trance any number of experiences. That period of half-wakefulness before one gets out of bed, or when one is falling asleep. Dreaming itself. Daydreaming. Meditation. Prayer. Phases in dementia. High concentration on a specific task. I “tranced” myself a few times back in the day with everything from Budweiser to opium to mescaline. In spite of the obvious elasticity of application for the word trance, for our purposes today, we will discuss hypnosis—a clinical technique for inducing a trance-like state in the patient/client using the power of suggestion.

As it always is with analytical excavations, we now have to stop and dig a bit deeper around that last word—suggestion, or in our case, suggestibility.

Suggestibility is defined as susceptibility or responsiveness to suggestion.

In Matthew 5:37 (DBH), there’s a warning against manipulative speech. Jesus teaches his disciples to “Rather, let your utterance be ‘Yes, yes,’ ‘No, no’; because it is from the roguish man that anything more extravagant than this comes.”

Don’t manipulate others.

Human beings are manipulable, of course, or such an admonition wouldn’t make sense. One of those vulnerabilities to manipulation is vulnerability to suggestion. There’s an entire industry based on this vulnerability to suggestion, called advertising. Buy our car, and all your dreams of adventure and romance will come true. Politics is another obvious example.

It’s for this reason—and here I am making another editorial excursion—that employing suggestion in any practice, especially in clinical settings, is pregnant with the possibility of abuse.

Hypnosis, in a clinical setting, is, by definition, manipulation by suggestion to induce a trance-like state. Apologists for hypnosis will tell you it’s just a state of deep relaxation, but I find that “just” modifier disingenuously deceptive. (No, I’m not coming out against all hypnotherapy.) Which means I might be one of those people who are less suggestible than others, or in plainer terms, I’m of a skeptical disposition.

Skepticism, in this case—and I am on record as someone who is far from what anyone would call a philosophical skeptic—means practical skepticism. This skeptical disposition, gained through experience in a culture where manipulation and exploitation are ubiquitous, accepted, and even justified, is a defense against the myriad hustles one encounters pretty much all the time. So my skepticism may be a bias, but it’s a damn well-founded one. It’s like wearing a head net when you cross a mosquito-ridden cedar swamp in early June. A pain in the ass and not pretty either . . . but it’s better than blood-drinking arthropods eating your face.

Hypnosis is defined as a “state of consciousness involving focused attention and reduced peripheral awareness characterized by an enhanced capacity for response to suggestion.” Change that term “capacity for response” to “capacity for vulnerability to suggestion” and see how it plays. It plays like the McMartin Preschool, where kids were subjected to relentless suggestion, through which “clinicians” implanted memories of things that never happened, and the mass panic went on to destroy countless lives and families.

Here is what psychotherapist Ivan Tyrrell has to say about hypnosis apologetics:

A half-truth is just as dangerous as a lie, even if offered with the best of intentions. Unfortunately a great many half-truths are spouted about hypnosis, and practitioners need to be careful not to promulgate them. They include the following.

• “Hypnosis is a natural state of relaxation and concentration, with a heightened awareness induced by suggestion”

It isn’t. As I have described, it is an artificial means of accessing the REM state, which can even be done violently by capturing attention with a sudden loud noise or startling movement.

• “Hypnosis is safe with no unpleasant side effects”

It is far from safe. It is an extremely powerful process and anything powerful can be used to do harm as well as good. Some people feel dizzy or uneasy, even after a relaxing session. They may feel psychologically unnerved about being ‘out of control’, particularly if they didn’t like the suggestions that were made to them. The literature is full of unpleasant or even dangerous effects that have been experienced after hypnosis. They include extreme fatigue; antisocial acting out; anxiety; panic attacks; attention deficit; body/self-image distortions; comprehension/concentration loss; confusion; impaired coping skills; delusional thinking; depression; depersonalisation; dizziness; fearfulness; headache; insomnia; irritability; impaired or distorted memory; nausea and vomiting; uncontrolled weeping and many, many more.

• “You will be aware of everything that is said to you”

Sometimes that is the case when someone is in a light trance but very often it is not, and that again parallels with dreaming since we don’t remember most of our dreams. When people go into a deep trance, they often have no memory of what the therapist said. That is not to say that they didn’t register it, but they cannot consciously recall it.

• “Hypnosis has nothing to do with sleep – it is just an extremely relaxed state”

Clearly this is wrong because hypnosis is very directly related to sleep: the REM (dreaming) stage of sleep is the deepest trance state of all.

• “A hypnotist cannot influence anyone to do anything against their will”

We know simply by delving into the history of hypnosis of many examples of unwanted influence. There are many modern day incidents, some of which are recorded on CCTV cameras, such as cashiers being hypnotised and handing over the money in their tills because they were put into a trance state, or people being shocked into trance and robbed in the street. Indeed, we have only to think of advertisers and politicians and rabble-rousers and gurus—all artificially induce the REM state in the people they wish to influence.

• “A person’s own ‘moral code’ will protect them from doing anything against their own best interests”

There is no evidence that people can be relied upon not to do things against their own best interests and masses of evidence that they do so all the time. People’s moral codes are as flexible and changeable as the climate.

• “The ‘unconscious’ is very wise”

I heard a hypnotherapist saying these exact words, in a lovely, caring tone, on a YouTube video. The unconscious is not necessarily wise at all. It is very much influenced by how we are brought up, our life experiences and the culture we live in and so on: our conditioning. As far as the unconscious mind is concerned the GIGO rule applies: garbage in, garbage out. Much of the therapeutic work done in trance is concerned with overriding automatic unconscious responses, altering unhealthy patterns and opening up limited perceptions.

Where the Satanic Panic phenomenon maps onto hypnosis and post-Freudian conceits about trauma and repression is with what became the “recovered memory movement.” Before we explore that, though, we need to briefly discuss “memory.”

Remembrance

In Psychology Today, I read: “Memory is the faculty by which the brain encodes, stores, and retrieves information.” This is, in my considered opinion, bullshit. They are describing the mind as a computer file folder. Once you begin with this model, everything you say afterward, if it is to maintain any kind of internal consistency, has to conform to this analogy. As to internal consistency, as we ought to know from experience, it’s often a bulwark against evidence from outside a closed ideational system that would challenge the core precepts of that system. This is how ideologies work; and anything from the outside that doesn’t fit is then forced to fit using only the categories that are allowed inside the system. Cultural tropes work this way, too. Sometimes they reflect reality, sometimes they selectively reflect reality, and sometimes they’re divorced altogether from reality.

So what is memory?

Is it, we might first ask, too broad a term to pin down with a tight definition . . . like the ideological, pseudoscientific claim above, where within the folder are sub-folders, like “semantic,” “procedural,” “working,” “sensory,” and “prospective”?

Science (real science) can give some extremely limited accounts of various physical phenomena associated with acts of remembering, telling us about “synaptic gaps,” “neuronal pathways,” and the like; whereas scientism (the ideology) would seek to reduce the reification called Memory to these descriptions of physical phenomena. Like trying to describe a concert using metallurgy, wave propagation theory, and aural anatomy.

Pop culture (especially manufactured pop culture) reproduces ludicrous accounts of remembering. We’ve all been exposed to movies, for example, about “amnesia” (loss of “memory,” the Bourne Trilogy, e.g.), PTSD “flashbacks,” full-color audio-visual reminiscences accompanied by beatific smiles or expressions of horror, etc. etc. You follow. (Entertainment media trades in a psychology of suggestion that’s incorporated unknowingly into our own “ways of knowing” as real, like a roofie in your drink.)

Rather than deal with this subject as “memory,” a reification (treating an idea like an actual thing), I’ll treat the subject of remembering, a verb instead of a noun. When you think about the word re-member, it means putting back together; and what we ostensibly put back together by remembering are phenomena, patterns, processes, events, etc.

I don’t even have to think about the route to our local grocery store or my favored fishing lakes. I’ve assimilated the birthdays of most of our family members. I can make pizza dough without the use of a recipe. When I cast my line over one of those lakes, I don’t deliberate on the technique (“muscle memory,” neuronal pathways).

“Memories” degrade. I used to have a “muscle memory,” as a paratroop jumpmaster in the Army, of pre-jump inspections; but after almost thirty years, that “muscle memory,” along with the cognitive recall that accompanied it, has atrophied. A paratrooper now would probably be best off with me not performing that inspection.

I’m also pretty sure I’m not the only person who’s forgotten what he was about to do when passing from room to room. We forget things we intended moments ago.

As to more complex forms of remembering, these have a strong social component. I had an extended period during one part of my life away from my family of origin (which I regret to this day), where they’d sustained family stories through re-telling (along with certain amendments and embellishments) as a kind of collective remembering. I found that, upon returning to the fold, as it were, I had completely forgotten some of those stories (narratives, if you like), or my recollection of them was substantially different than the family consensus.

I’m staying in the first person here, because much remembering is phenomenological. I don’t have “memories” like files in a folder; I have something far more ephemeral, reconstructed in telling, swimming around in my mind/body: something absolutely irreducible to physics or biology, which bears not the least resemblance to mechanical audio-visual reproductions.

There are, in fact, many qualitatively different ways of remembering, though all involve the elements of attention and receptivity. Many forms of remembering are also merged with the agendas of desire, and so come to involve confabulation. We remember things falsely (partial falsehoods and outright falsehoods) because this falsification answers to some desire, even, in some cases, disordered desire . . . like shame, ambition, obsession, addiction, or attention-seeking. In rarer cases, mis-remembering is a symptom of an organic malfunction (think schizophrenia, dementia, or brain injury).

In jurisprudence, we have a thing called “eyewitness testimony,” given great weight by prosecutors, judges, and juries, in spite of the fact that study after study has shown that eyewitness recall is notoriously unreliable. Around 75 percent of confirmed wrongful convictions for rape and murder have been based on eyewitness testimony that was later overturned by more reliable evidence, especially DNA testing. Since 1989, 358 people in the US have been exonerated by DNA testing, some of whom had been on death row. 71 percent of them were convicted using eyewitness testimony.

“Memories” are initially formed in contexts inflected heavily by attention. If you consider right now what you’re paying attention to, then look around, you’ll get an idea about how many things are going on to which you’re not attending, and which will not be incorporated into your memory of this moment . . . if the moment itself is memorable at all.

The store clerk who was robbed at gunpoint remembers the gun quite clearly, but not the robber’s face or clothing. She wasn’t attending to the robber’s face; her attention was riveted on that deadly hardware standing in the door between her and the grim boatman. She certainly saw the robber’s face, but there’s no “memory” of it.

After an experience—and by that I mean again-and-again after an experience, because we’re constantly interrupted in our remembering—we begin constructing and reconstructing our recall of it. In that process, we add and subtract, fill in the gaps, adjust it to our self-image or the image we wish to project, and even have it relayed back to us by others and through other experiences in ways that amend how we remember that experience. Remembering (in the narrow form of making “memories,” as opposed to “remembering” how to tie one’s shoe) is affected by biases, self-interest, people-pleasing, stress, whatever.

How many of us have ever heard of “imagination inflation”? (I didn’t until I started this research.)

In the late 1990s, Lyn Goff (no relation) and Henry Roediger did a study of imagination inflation, defined as “the memory fallacy in which the mental picturing or imagining of an event that didn't occur increases the individual's confidence that the event actually occurred.” The title of their publication of results was “Imagination inflation for action events: Repeated imaginings lead to illusory recollections.” Here’s the abstract:

Investigated whether imagining may have deleterious consequences for memory. In 2 experiments, undergraduates heard simple action statements, and, in some conditions, they also performed the action or imagined performing the action. In a 2nd session that occurred at a later point (10 min, 24 hrs, 1 wk, or 2 wks later), Ss imagined performing actions 1, 3, or 5 times. Some imagined actions represented statements heard, imagined, or performed in the 1st session, whereas other statements were new in the 2nd session. During a 3rd (test) phase, Ss were instructed to recognize statements only if they had occurred during the 1st session and, if recognized, to tell whether the action statement had been carried out, imagined, or merely heard. The primary finding was that increasing the number of imaginings during the 2nd session caused Ss to remember later that they had performed an action during the 1st session when in fact they had not (imagination inflation). This outcome occurred both for statements that Ss had heard but not performed during the 1st session and for statements that had never been heard during the 1st session. Findings indicate that imagining performance of an action can cause its recollection as actually having been carried out. (PsycINFO Database Record (c) 2016, APA, all rights reserved)

Imagination inflation is one means by which “false memories” can be constructed and consolidated in a person’s mind as if they were the recollection of real events (like Satanic ritual sex abuse). This imagination inflation effect can be more pronounced with childhood memories, because children do not remember the way adults do. Remembering is a developmental aptitude.

We don’t remember the first couple of years of our lives, and we remember very little of the first several years. Let’s go back to basics: “memory” is not a videotape or a set of photographs, or a written record waiting to be re-read. Just as we develop (or actualize, in more Aristotelian terms) in a dialectic between organic development and experience with regard to physical strength, coordination, agility, as well as in cognitive, practical, and affective capacities, the practices of remembering are also processes of both organic development and progressive mastery.

Take memorization as an example if that’s not too refractive. Intentionally memorizing a list (say, of vocabulary words in a new language) is something that is simply not possible for a one-year-old. She has neither the physiological, cognitive, nor affective capacity (yet) to self-consciously and intentionally defer present desires and overcome present aversions in pursuit of a future-imagined goal. Moreover, children have not developed the complex structures of meaning, linguistic and cognitive, by which to organize how they remember. They have little experience to compare with other experience to create meaning. This is common sense.

And every parent knows that as children grow, for some time those children’s “memories” are mixed together with fantasies. One has to attain a certain level of maturity before he or she can discern the difference between imagination and reality, or between representations (on television, e.g.) and reality. (As our society has become increasingly technologically infantilized, more and more physical adults display an impoverished capacity for this kind of discernment.)

As we grow and develop, then, we have to change the way we organize the practice of remembering, which causes us to “lose” many of those “memories” that were “organized” in more childlike fashion (a five-year-old can remember events from age two, for example, whereas an eighteen-year-old has lost any recall of them).

I watched a video when I prepared for this, where people who thought they were watching a film being made saw a staged collision between a moving car and a stationary one. Observers were then asked, within an hour, to estimate the speed of the moving car when it hit the parked one.

When the question was something like, “How fast was the red car going when it hit the yellow one?” respondents consistently gave lower estimates than when asked, “How fast was the red car going when it crashed into the yellow one?” The difference between the words “hit” and “crashed” altered the memories of the event.

This is called the “misinformation effect,” when directive or misleading information is smuggled into the narrative after the fact and changes the “memory.” One of the researchers who developed this concept was Dr. Elizabeth Loftus, who has also done experiments proving she could implant false memories in subjects; memories that still persisted as memories even after subjects were told what had been done. (I agree with Loftus generally in her conclusions, but I find her experiments to achieve them ethically questionable, as well as her willingness to deploy her insights on behalf of actual predators as a well-paid expert in trials. This “ethical flexibility” does not, however, disprove her research.)

Hopefully, dear reader, you catch my drift, and we needn’t spend much more time in this particular rabbit hole. Long story short, the idea of “recovering” intact and pristine memories from childhood, sometimes after decades, is, shall we say, problematic. Even as adults, we don’t remember most of the details of any event; we remember “landmarks,” we grab “handles,” and our complex remembrances are organized as habits and the narratives we and others have constructed around them.

What was the most common denominator during the “therapies” which led to “recovered memories” of “Satanic ritual abuse”? Hypnosis. “Clinicians,” who are more rightly characterized as dangerous quacks, were engaged in “directive therapy,” in which they used highly suggestive techniques, based on prior and unsubstantiated beliefs about the patient, based on clinicians’ agendas, to create “memories” of things that never happened.

In 1991, Spanos et al. published a study that demonstrated the ability of hypnotists to “help patients recall” past lives . . . yep, before they were reincarnated into their present lives. The dynamic here was a feedback loop between client and therapist, in which they co-participated not only in creating recollections of things that didn’t happen, but in convincing the client/patient that they really did happen . . . that the “memories” were real (imagination inflation).

Exposure of this lunacy did not, however, stop the broad acceptance of some of its worst aspects. One of those aspects was related not to remembering, but forgetting.

Dissociative Drivel

I want to recommend a novel/film: Primal Fear, a novel (1993) by William Diehl, which was turned into a film in 1996. Okay, I’m a bad man, because I’m also going to do a spoiler (watch it with friends, and experience their surprise at the end vicariously).

It’s a legal thriller in which a young man commits two brutal murders (one of an admittedly creepy dude), whereupon his attorney defends him based on what was then called “multiple personality syndrome,” later to be called “multiple personality disorder,” and finally landing in our current linguistic technocratic shit heap as “dissociative identity disorder.”

Promoting this magical notion? Films: Psycho (1960) and Sybil (1976). The latter was actually “based on the true story” (of a bullshit diagnosis).

In Primal Fear, after the murderer (the frail, stuttering Aaron Stampler) is declared not guilty based on his “alter” (the non-stuttering, aggressively confident “Roy”) having committed the crime, he confesses to his lawyer (Martin Vail) that it was all an act. From the film script, by Steve Shagan & Ann Biderman:

AARON: Well, good for you, Marty.

I was gonna let it go.

You were looking so happy just now. I was thinking...

To tell you the truth, I'm glad you figured it.

'Cause I have been dying to tell you.

I just didn't know who you'd wanna hear it from.

Aaron or Roy, Roy or Aaron.

Well, I'll let you in on a client-attorney-privilege type of secret.

It don't matter who you hear it from. It's the same story.

I j-j-just...

had to kill Linda, Mr Vail.

That cunt just got what she deserved.

But...cutting up that son of a bitch Rushman...

...that was just a fucking work of art.

You're good. You are really good.

Yeah, I did get caught, though, didn't I?

MARTY: So there never... there never was a Roy?

AARON: Jesus Christ, Marty. If that's what you think, I'm disappointed in you.

There never was an Aaron, counsellor.

Come on, I thought you had it figured there at the end.

The way you put me on the stand like that, that was brilliant.

The whole "act-like-a-man" thing. I knew what you wanted.

It was like we were dancing, Marty!

- Guard. - Don't be like that, Marty.

We did it, man. We fucking did it. We're a great team, you and me.

You think I could've done this without you?

You're feeling angry because you started to care about old Aaron, but...

...love hurts, Marty. What can I say?

I'm just kidding, bud! I didn't mean to hurt your feelings!

What else was I supposed to do?!

You'll thank me down the road, 'cause this'll toughen you up, Martin Vail!

You hear me? That's a promise!

“It was like we were dancing, Marty.” This isn’t exactly the circular feedback loop between suggestive therapist and patient, but it echoes it. Another thing the film dramatizes, which happens in medical settings quite frequently, is malingering. From the National Library of Medicine:

Malingering is falsification or profound exaggeration of illness (physical or mental) to gain external benefits such as avoiding work or responsibility, seeking drugs, avoiding trial (law), seeking attention, avoiding military services, leave from school, paid leave from a job, among others.

There’s no doubt that a patient who would come into a therapeutic setting and make stuff up has some issues—malevolence, attention-seeking, drug-seeking, whatever—but that doesn’t change the fact that he or she is lying.

So, not every “false memory” is necessarily the suggestive product serving a quack therapist’s agenda. It can be an incompetent therapist being manipulated by a client.

The problem is, with issues of memory, which appear in the commons only as subjective reports, there is no way of falsifying them with additional evidence . . . a bottom line for any truly rigorous scientific conclusion.

We’ll return momentarily to “dissociative identity disorder,” and another one called “dissociative amnesia,” but first we need to unpack dissociation by itself. My helper here is Lawrence Patihis, senior lecturer, Department of Psychology, University of Portsmouth, UK. He has taken a very close look at the concept of psychological dissociation to see how it stands up as a concept to logical scrutiny. Much of what I’ll say on this (without making constant references back to him) is a restatement of his examination or inspired by reflections on it.

First of all, questioning the precision and utility of the concept of traumatic dissociation is not the same as denying that some “post-traumatic” experiences identified with the concept are real. I am going to challenge some “reported” experiences, but experiences called “dissociative” are not being dismissed wholesale as unreal experiences. It’s the concept that’s in the dock.

Dissociation, as it’s being discussed here, was first conceptualized in conjunction with the early development of hypnosis prior to the American Civil War. Based on anecdotal observation by practitioners, three general phenomena were inferred which would later relate to our questionable concept: (1) a segment of time was experienced as “lost” (during the hypnotic trance), (2) the “self” was experienced as disembodied or “outside,” and (3) the subject was rendered highly suggestible. As time went by, these three phenomena were named dissociative amnesia, depersonalization/derealization, and absorption.

In early practice, these sessions were aimed at “hysterical” women, and involved curatives like magnets . . . yes, magnets. This was when the idea of literal “animal magnetism” was invented, by people who saw themselves as the vanguard against premodernity’s primitive superstitions. Anyway, as magnetic therapy went by the wayside, the idea of curative hypnosis survived through the next two centuries (and is still being used today to “recover memories” of Satanic ritual abuse and past lives).

The idea of “lost time,” a pivotal trope in Primal Fear, was never subjected to real scientific scrutiny, but took on its own life in clinical settings where the therapist was already engaged in suggestive practices. It’s just as likely, based on what’s been actually established in rigorous studies to date—and not an episode of Law and Order you saw once—that this “dissociative amnesia,” as “lost time” came to be called, is generated in the suggestive feedback loop between clinician and client based on clinician expectations or patient malingering . . . or both (dancing).

But note what happened: the idea of hypnotic dissociation migrated from the clinical setting to the “post-traumatic” scenario apart from hypnosis. So now we have, pursuant to the Satanic panic, post-traumatic dissociative amnesia (an actual early Freudian category, building on the hypotheses of Jean-Martin Charcot and Pierre Janet).

In 1909, Boston neurologist Morton Prince, based on his sessions of hypnotherapy with an “hysterical” student, Clara Norton Fowler, extrapolated her reports of having more than one person inside her into an actual syndrome, then called “multiple personalities,” eventually coming to be re-named by some DSM editorial committee as “dissociative identity disorder.”

This idea fell out of favor, then fell back into favor by turns, based on fashions in clinical psychology, which were themselves inflected by the power of movies and television (Sybil was a kind of watershed film). The idea was developed, assuming an argument from authority (hey, doctors say so!), and has never been confirmed through scientific experimentation, because subjective reports are unfalsifiable.

I’m going to paste in the Mayo Clinic’s entire description of dissociative disorders, which you may notice has only a list of symptoms. In medicine, symptoms—which are basically reports from the patient—are differentiated from signs—which are objective measures, like actual physical examination and lab results. With real diseases, like strep throat or advanced atherosclerosis, symptoms are just a set of guides in the search for signs, which rule out or confirm a diagnosis. There are no signs for dissociative disorders, and we’ll explain why after you read Mayo:

Dissociative disorders are mental health conditions that involve experiencing a loss of connection between thoughts, memories, feelings, surroundings, behavior and identity. These conditions include escape from reality in ways that are not wanted and not healthy. This causes problems in managing everyday life.

Dissociative disorders usually arise as a reaction to shocking, distressing or painful events and help push away difficult memories. Symptoms depend in part on the type of dissociative disorder and can range from memory loss to disconnected identities. Times of stress can worsen symptoms for a while, making them easier to see.

Treatment for dissociative disorders may include talk therapy, also called psychotherapy, and medicine. Treating dissociative disorders can be difficult, but many people learn new ways of coping and their lives get better.

Symptoms depend on the type of dissociative disorder, but may include:

A sense of being separated from yourself and your emotions.

Thinking that people and things around you are distorted and not real.

A blurred sense of your own identity.

Severe stress or problems in relationships, work or other important areas of life.

Not being able to cope well with emotional or work-related stress.

Memory loss, also called amnesia, of certain time periods, events, people and personal information.

Mental health problems, such as depression, anxiety, and suicidal thoughts and behaviors.

The American Psychiatric Association defines three major dissociative disorders: Depersonalization/derealization disorder, dissociative amnesia, and dissociative identity disorder.

Depersonalization/derealization disorder

Depersonalization involves a sense of separation from yourself or feeling like you're outside of yourself. You may feel as if you're seeing your actions, feelings, thoughts and self from a distance, like you're watching a movie. (DING!)

Derealization involves feeling that other people and things are separate from you and seem foggy or dreamlike. Time may seem to slow down or speed up. The world may seem unreal.

You may go through depersonalization, derealization or both. Symptoms, which can be very distressing, may last hours, days, weeks or months. They may come and go over many years. Or they may become ongoing.

Dissociative amnesia

The main symptom of dissociative amnesia is memory loss that's more severe than usual forgetfulness. The memory loss can't be explained by a medical condition. You can't recall information about yourself or events and people in your life, especially from a time when you felt shock, distress or pain. A bout of dissociative amnesia usually occurs suddenly. It may last minutes, hours, or rarely, months or years.

Dissociative amnesia can be specific to events in a certain time, such as intense combat. More rarely, it can involve complete loss of memory about yourself. It sometimes may involve travel or confused wandering away from your life. This confused wandering is called dissociative fugue.

Dissociative identity disorder

Formerly known as multiple personality disorder, this disorder involves "switching" to other identities. You may feel as if you have two or more people talking or living inside your head. You may feel like you're possessed by other identities.

Each identity may have a unique name, personal history and features. These identities sometimes include differences in voice, gender, mannerisms and even such physical qualities as the need for eyeglasses. There also are differences in how familiar each identity is with the others. Dissociative identity disorder usually also includes bouts of amnesia and often includes times of confused wandering.

All of us have two or more people living in our heads. Dozens, in fact, maybe hundreds. It’s called mimetic learning. There is actually a whole profession, the practice of which entails taking on an endless string of “alters.” It’s called acting (something all of us do from time to time, and what people displaying DID are doing . . . some as technical actors, some as method actors.)

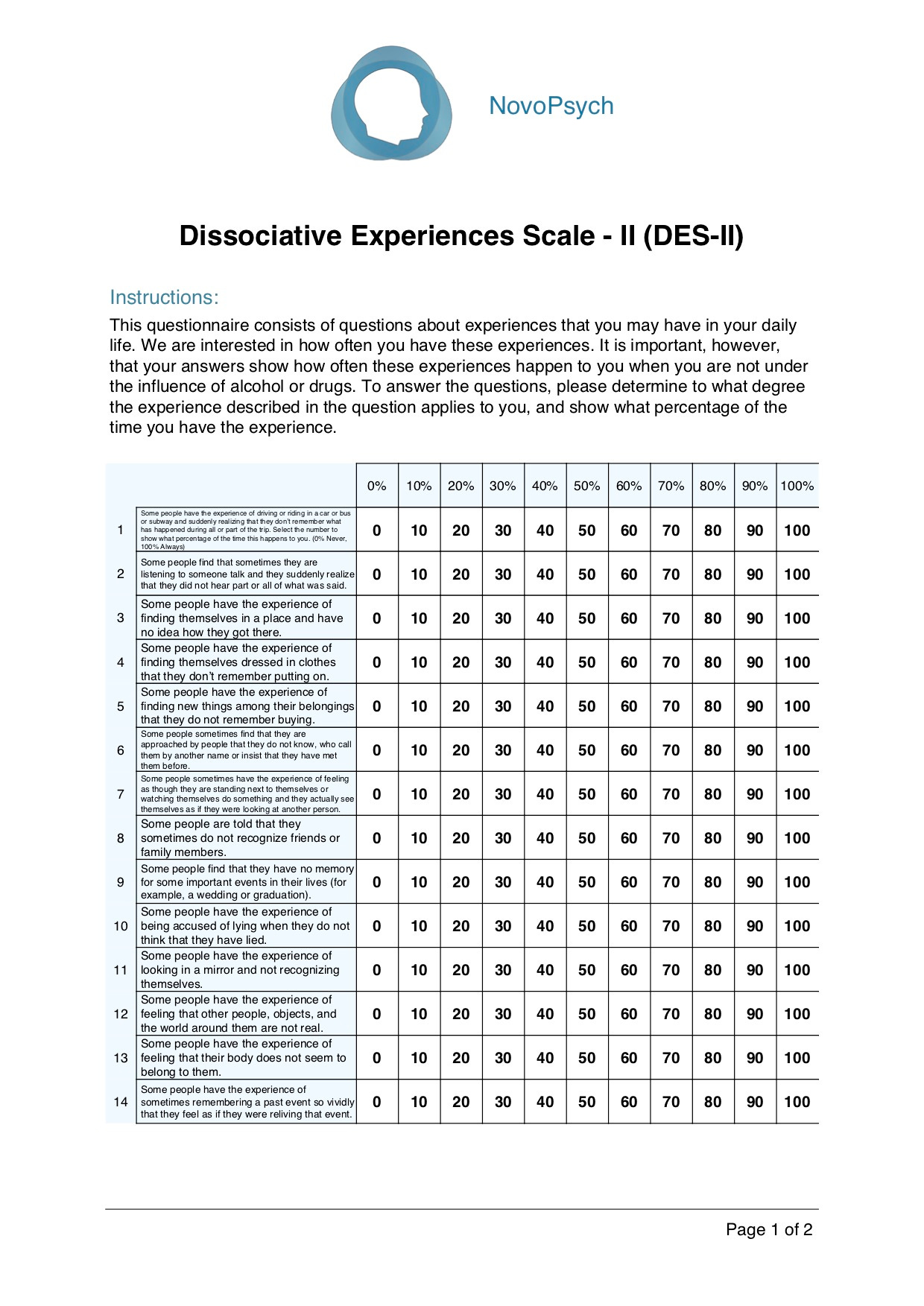

These symptoms are often elicited using the Dissociative Experiences Scale (DES), a self-assessment list of 28 questions, which employs three sub-scales: the amnesia factor, the depersonalization/derealization factor, and the absorption factor. Claims of its reliability and validity have to assume (1) that subjects are not suffering from co-morbidity or subject to any other influences at all, (2) that subjects are not displaying vulnerability to suggestion, and (3) that subjects are not malingering.

Advocates of the DES emphasize that it has a high degree of internal consistency. But we addressed this above. Every ideology has a high degree of internal consistency. Belief in an international Jewish conspiracy or the lizard people is internally highly consistent. Validity is constituted not by internal consistency, but by whether an hypothesis or an idea or even a tradition can survive relevant new evidence that undermines that internal consistency. By relevant, I mean to say, yes, anything can be “disproved” or at least challenged by arguments that have no commensurable postulates (see Alasdair MacIntyre); but the DES exists within, and claims to rest upon, scientific practice. By the rigorous standards of that practice, because DES interpretations are all unfalsifiable, it fails to meet the standards of reliability and validity. Its internal consistency is inconsistent with the practical postulates of its general fields (scientific research, medicine).

We’ve given some space to “amnesia,” and we’ll unpack the other two sub-scales as we go through the DES; but before we even go there, the first untested and unproven assumption we need to point out is that these three sub-scales are presented as a bundled unit, called traumatic dissociation. This unbreakable trinity is a nineteenth-century artifact that’s somehow remained unquestioned.

Patahis and his colleagues compared DES results with another self-assessment scale, the Traumatic Experiences Checklist (TEC), which attempts to sort trauma into 29 different categories (which should give us pause about metaphorical “trauma” itself). In any case, while correlations were in places substantial enough to warrant further inquiry, they were not sufficient to justify combining the three DES sub-scales together as a single phenomenon called dissociation. Even then, my own caveats about self-reports still standing, the statistical evidence, as fuzzy as it is, does not sustain the three-part package called traumatic dissociation.

And does the entirely subjective construction of these two self-assessments, along with cultural exposure to these media-popularized notions, tend to create and inflate much of the correlation that’s there? Pretty much, yeah.

Let’s look at the construction of the DES.

Note first of all from this sample page that the subject has to choose percentiles of time spent (with no clarifications) “experiencing” X, Y, or Z, which is itself a kind of mini-vignette . . . “the experience of sometimes remembering” . . . The respondent, who sees a title for each section designed to deceive him/her as to the actual “symptom” being assessed by the clinician, is asked to employ what we’ve already established is constructed memory (as opposed to quasi-photographic recall) to assign a numerical value to the amount of time they spend doing something vaguely stated. Yet if they actually do these things, and if they actually can (remember “vividly,” as if this means accurately, e.g.), they aren’t preoccupied during the experiences themselves with their timing. Reader, how much time have you spent in the last week thinking about sex? Or food? Or traffic? To make things worse, many of the questions are asking the respondent to remember what they forgot! Or “feelings”!

Part of one of my jobs was event organizing. My memory for that? A notebook and a pencil with a good eraser.

“Memory,” as Frederic Bartlett said all the way back in 1932, “is reconstructive rather than reproductive.”

Should we “believe” whosoever, unconditionally, based solely on memory without corroborating evidence? In day-to-day life, when we’re dealing with other kinds of memory, we have to at times; but in the case of medicine or law, thousands falsely accused of Satanic ritual abuse and 254 Americans rescued from death sentences, based on “vivid memories,” say “no.”

For the record, number 7 (above) is about depersonalization. It could describe pretty much anyone involved in a Twitter feud. The associated derealization (the sense that something is not real, as some people transiently experience when they hear of the sudden death of a loved one) is not dissociation. Number 2 is amnesia. Forgetting things is amnesia? I’ve had amnesia approximately 20 times today and it’s not even 1 PM. On absorption: “the term ‘absorption’ refers to the tendency to become immersed in a single stimulus, either external (e.g., a movie or a book) or internal (e.g., a thought or an image), while neglecting other stimuli in the environment. According to Tellegen and Atkinson (Tellegen Absorption Scale; TAS, 1974), it represents an inclination to enter states of ‘total attention.’” I’m one absorptive, dissociative mf when I’m writing!

When Patahis et al. compared DES scores with TAS scores (see above), they found in their first session a less than .7 correlation on absorption (and less than .5 on depersonalization, and less than .4 on amnesia). This, again, suggests either flaws in the tests, flaws in the concept of dissociation as described, or both. The sub-scales, if you give them charitable credence, are insufficiently correlated to be combined into a general category called dissociation.
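To put those coefficients in perspective, one rough yardstick is the squared correlation, which estimates the share of variance two measures have in common. The sketch below is only an illustration: the function name is made up, and the inputs are the upper bounds quoted above ("less than" each value), not the study's exact figures.

```python
def shared_variance_percent(r):
    """Percent of variance two measures share, given their correlation r."""
    return round(r * r * 100)


# Upper bounds quoted in the text ("less than" each value), not exact results.
upper_bounds = {"absorption": 0.7, "depersonalization": 0.5, "amnesia": 0.4}

for subscale, r in upper_bounds.items():
    pct = shared_variance_percent(r)
    print(f"{subscale}: r < {r} means under {pct}% shared variance")
```

On that yardstick, even the best-correlated sub-scale leaves more than half of the variance unaccounted for.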

Something else that happens during these kinds of tests, as demonstrated in experiments, is “anchoring.” That is, once the respondent checks 30%, for example, he or she will be more prone to check 30% more frequently as they progress down the sheet. If the respondent “anchors” high on the scale at the beginning, he or she is more apt to receive a higher dissociation score, but not because he or she is more “dissociative.”
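Here is a toy sketch of how anchoring alone could inflate a score. Everything in it is an assumption made for illustration: the "true" item values are invented, the 50/50 blend between the true value and the previous answer is arbitrary, and averaging the 28 answers is used as a stand-in for the total score. It is not a model taken from the experiments mentioned above.

```python
def simulate_responses(true_values, first_answer, anchor_weight):
    """Answers given when each one is pulled toward the previous answer.

    A toy model of anchoring: anchor_weight=0 means no anchoring at all,
    anchor_weight=1 means every answer simply repeats the first one.
    """
    answers = [first_answer]
    for true_value in true_values[1:]:
        blended = (1 - anchor_weight) * true_value + anchor_weight * answers[-1]
        # Answers are checked off in steps of 10 percent.
        answers.append(int(round(blended / 10.0)) * 10)
    return answers


def mean_score(answers):
    """Average of all answers, used here as the overall score."""
    return sum(answers) / len(answers)


# Hypothetical "true" frequencies for 28 items (invented for illustration).
TRUE_VALUES = [10, 20, 0, 30, 10, 20, 10, 0, 20, 10, 30, 10, 20, 0] * 2

low_start = simulate_responses(TRUE_VALUES, first_answer=0, anchor_weight=0.5)
high_start = simulate_responses(TRUE_VALUES, first_answer=60, anchor_weight=0.5)

print("score after a low first answer: ", mean_score(low_start))
print("score after a high first answer:", mean_score(high_start))
```

The two simulated respondents have identical underlying values; only the first checkmark differs, and the respondent who starts high ends with the higher score.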

Okay, enough shade on dissociation alone. Let’s turn to dissociation and trauma, even pretending that we don’t already have doubts about dissociation as a valid scientific category (or those reservations about metaphorical trauma).

Many practicing clinicians believe:

(1) Trauma causes dissociation. For the record, the words certainty and cause are Really Big Words in rigorous scientific practice, requiring a lot of highly exacting experimentation. That’s why science is too small to represent truth, but strongly disciplined (when done properly) in ruling out variables before reaching conclusions.

(2) Trauma can and does, in many cases, cause amnesia. Amnesia (noun): Partial or total loss of memory, usually resulting from shock, psychological disturbance, brain injury, or illness. In the popular mind, amnesia is forgetting who you are (Jason Bourne). Let’s see what’s assumed here. First, “memory” as some immaculate category instead of the complex landscape we described earlier. I’m nitpicking a teeny bit, but when you think of “partial or total loss of memory,” as a pathological state, then we’re all implicated, because partially and totally forgetting things is actually a characteristic of every living person.

(Remembering everything sounds like a nightmare to me.)

Following on, it says, “usually resulting from shock, psychological disturbance, brain injury, or illness.” Okay, now we’ve gone in a circle.

(3) Early childhood abuse, especially of a sexual nature, can and often does result in severe dissociation, including “dissociative identity disorder.” This assumes such a disorder is an actual medical pathology instead of a media-assisted species of malingering, built up in suggestive feedback loops between patients and those clinicians who do actually believe it, or who stand to profit from it.

I have to ask the question, how do we distinguish between “amnesia” and plain errors in remembering? I also have to point out that, in studies, corroborated sexual abuse prior to age 7 fails to appreciably correlate with so-called cases of dissociative identity disorder (DID, multiple personalities). Nor do “trauma” indices appreciably correlate with DID.

The assumptions of clinicians and the public about DID are arguments from authority, based on faulty terms and premises, buttressed by an immense volume of publication in journals, books, popular periodicals, and social media. Advocacy, here, precedes rigorous study, and becomes a form of confirmation bias and groupthink. The notion is further buttressed by the entirely justified desire to end actual child abuse, which can mislead credulous people into conflating rejection of this diagnosis with somehow harming children.

. . . literally . . .

In the 1980s and 1990s, challenges to this constellation of ideas around post-traumatic dissociation led to a minor culture war, polarizing opinions around it. In this corner, The Recovered Memory Movement . . . in this corner, False Memory Theory! This is how nuance is lost, and both sides in the low intensity culture wars transition from receptive to tactical, from critical to antagonistic. These kerfuffles between various psychologists were called “the memory wars.” Which is not to say that one side in such a conflict doesn’t have better evidence, or that perhaps, in some cases, certain ideas put into practice do actual harm.

Let’s refer back to mimesis: the incorporation or assimilation of ideas and actions through imitation (a bit more complex than mere imitation) (see link here). One form of mimesis is the (sometimes imperfect) imitation of cultural archetypes. Examples include the surge in children and adolescents, especially girls, reporting that they suffer from gender dysphoria, the surge in mass shootings, and the digitally fueled teen suicide and pregnancy pacts of the mid-2000s.

From Beanie Baby crazes to Cabbage Patch dolls, all of us have some memory of mimetic outgrowths, some relatively benign, some horrifying. (See also social cognitive theory.)

Digital media has become a force multiplier for the worst of this tendency. I did a search on YouTube during my research on “dissociative identity disorder,” which I immediately regretted, because the algorithm flooded me with videos in support of the diagnosis and not a little complete lunacy . . . all with about a jillion views each. (Moral of the story: (1) YouTube distorts reality and (2) learn how to erase the history and tell YouTube not to send you to that channel.) One researcher counted, and skeptical videos on YouTube about DID were 2.5 percent of the total. The fact that it’s listed in the DSM, which people assume to be a scientific document instead of a consensus document, only makes matters worse.

It is my belief that dissociative identity disorder is a mimetic phenomenon, one seeded into the public consciousness by pop media and nourished by “clinicians.”

The very nature of consciousness (or awareness that entails the ongoing synthesis of perception, introspection, interpretation, correction, remembering, habituation, etc., combined as phenomenal experience) is such that, except in cases of injury (brain damage) or disease (schizophrenia), a waking “break” in consciousness, between “personalities,” would require a nearly instantaneous transformation of not only every aspect of the person from autonomic physical processes through interpretive symbolic cognition, but of the entire surrounding environment (which constitutes an aspect of phenomenal experience) as well.

Shirley Ardell Mason, upon whom Sybil was based, was diagnosed (by psychiatrist Cornelia Wilbur) with 16 “alters” (alternate personalities). There was never any evidence, by the way, that she had suffered from severe child abuse. This association was developed later on and apart from her case.

Investigative journalist Debbie Nathan (who also studied the “Satanic ritual abuse” panic) studied the case in great detail and published a book which concluded that Mason, who had suffered from mental and physical health breakdowns most of her life, never displayed or reported these “alters” until after she came into contact with Wilbur. Columbia Professor of Psychiatry (and hypnotherapist) Herbert Spiegel, who had examined Mason extensively with Wilbur, was likewise convinced that Wilbur had engaged in directive therapy which implanted the diagnosis. It seems very likely that Mason was, like her mother before her, suffering from schizophrenia compounded with some other physical maladies (including pernicious anemia; she was a devout Seventh-day Adventist vegetarian). No Hollywood blockbuster was ever made of the skeptics; but after Sybil’s Hollywood release, there was a surge in patients seeking help for their “split personalities.”

Back in my drinking days, people would probably tell you my personality changed after about the third drink, but I didn’t change my name and autobiography. (I’m so glad that’s over!)

Numan Gharaibeh, MD, staff psychiatrist in the Department of Psychiatry, Danbury Hospital (CT), said that dissociative identity disorder should be removed from the DSM-V (“Dissociative identity disorder: Time to remove it from DSM-V?” Current Psychiatry, September 2009). The compromise between DID advocates and skeptics (that consensus document thing again) to include DID in the DSM was, according to Gharaibeh, “an equal footing fallacy,” what is more popularly called “both-side-ism.” He cited Piper and Merskey’s extensive literature review (82 citations), which concluded (1) there is categorically no proof that DID results from childhood trauma, (2) DID cases in children are almost never reported, and (3) there is “consistent evidence of blatant iatrogenesis” in the practice of some DID proponents. Gharaibeh describes the DSM’s definition of DID as “a tautology.” (« yep, yep, yep)

I should also remind readers, as we’ve covered a good deal of territory, that DID, by definition, is actually a sub-set of PTSD.